You’ve probably heard of Kaggle’s data science competitions, but did you know Kaggle has many other features that can help with your next machine learning project? If you are looking for data, Kaggle lets you browse public datasets shared by others and publish your own. If you want to build and train models, Kaggle provides an in-browser notebook environment with some free GPU hours. You can also view other people’s public notebooks!

In addition to the website, Kaggle has a command-line interface (CLI) that you can use to access and download datasets from within the command line.

Let’s see what Kaggle has to offer!

After completing this tutorial, you will know:

- What Kaggle is

- How to incorporate Kaggle into your machine learning pipeline

- How to use the command-line interface (CLI) of the Kaggle API

Let’s get started!

Overview

This tutorial is divided into five sections:

- What is Kaggle?

- Setting up Kaggle Notebooks

- Using Kaggle Notebooks with GPUs/TPUs

- Using Kaggle Datasets with Kaggle Notebooks

- Using Kaggle Datasets with the Kaggle CLI tool

What is Kaggle?

Kaggle is probably best known for the data science competitions it hosts, some of which offer five-figure prize pools and attract hundreds of teams. Aside from these competitions, Kaggle users can also publish and search for datasets for use in machine learning projects. You can work with these datasets either in Kaggle notebooks in your browser or by downloading them through Kaggle’s public API.

Kaggle also has some courses and a discussion page where you can learn more about machine learning and interact with other machine learning practitioners!

The remainder of this article will concentrate on how we can use Kaggle’s datasets and notebooks to assist us in working on our own machine learning projects or finding new projects to work on.

Setting up Kaggle Notebooks

To begin using Kaggle Notebooks, you must first create a Kaggle account, either by using an existing Google account or by using your email address.

Then navigate to the “Code” page.

You will then be able to see both your own notebooks and public notebooks created by others. Click on New Notebook to start your own notebook.

This will generate your new notebook, which will look similar to a Jupyter notebook and contain many similar commands and shortcuts.

By going to File -> Editor Type, you can also switch between a notebook editor and a script editor.

Changing the editor type to script replaces the cell-based notebook view with a single script file.

Using Kaggle Notebooks with GPUs/TPUs

Who doesn’t appreciate having free GPU time for machine learning projects? GPUs can significantly accelerate the training and inference of machine learning models, particularly deep learning models.

Kaggle provides some free GPU and TPU allocations that you can use for your projects. At the time of writing, you get 30 hours of GPU time and 20 hours of TPU time per week once you have verified your account with a phone number.

To connect an accelerator to your notebook, go to Settings -> Environment -> Preferences.

You will be asked to provide a phone number to verify your account.

You are then taken to a page that shows how much of your weekly allocation is left. It also notes that turning on a GPU reduces the number of CPUs available, so enabling one is probably only worthwhile for neural network training or inference.
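Once an accelerator is attached, it can be handy to confirm from inside the notebook that a GPU is actually visible. The snippet below is a minimal sketch using only the Python standard library. It assumes that GPU-enabled sessions expose the NVIDIA `nvidia-smi` utility on the PATH, which is an assumption about the environment and not something stated in this tutorial; the function name `gpu_visible` is made up for illustration.

```python
import shutil
import subprocess


def gpu_visible(tool: str = "nvidia-smi") -> bool:
    """Return True if the given driver tool exists and reports at least one GPU.

    Assumption: the tool is nvidia-smi, whose -L option lists GPUs one per line.
    """
    path = shutil.which(tool)
    if path is None:
        # Tool not installed or not on PATH: treat as "no GPU visible".
        return False
    try:
        result = subprocess.run(
            [path, "-L"], capture_output=True, text=True, timeout=30
        )
    except (OSError, subprocess.TimeoutExpired):
        return False
    return result.returncode == 0 and "GPU" in result.stdout


if __name__ == "__main__":
    print("GPU visible:", gpu_visible())
```

If this prints `False` after you have switched the accelerator on, the session may need to be restarted before the change takes effect.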

Using Kaggle Datasets with Kaggle Notebooks

Machine learning projects are data-hungry beasts, and finding datasets for current projects or starting new projects is always a chore. Fortunately, Kaggle has a large collection of datasets submitted by users and from competitions. These datasets can be a gold mine for people looking for data for their current machine learning project or new project ideas.

Let’s see how we can incorporate these datasets into our Kaggle notebook.

First, on the right sidebar, click Add data.

A window should appear with some publicly available datasets and the option to upload your own dataset for use with your Kaggle notebook.

For this tutorial, I’ll be using the classic Titanic dataset, which you can find by typing your search terms into the search bar at the top right of the window.

After that, the dataset is ready for use by the notebook. To access a file, take its path within the dataset and prepend ../input/ to it.

For example, the Titanic dataset’s file path is:

../input/titanic/train_and_test2.csv

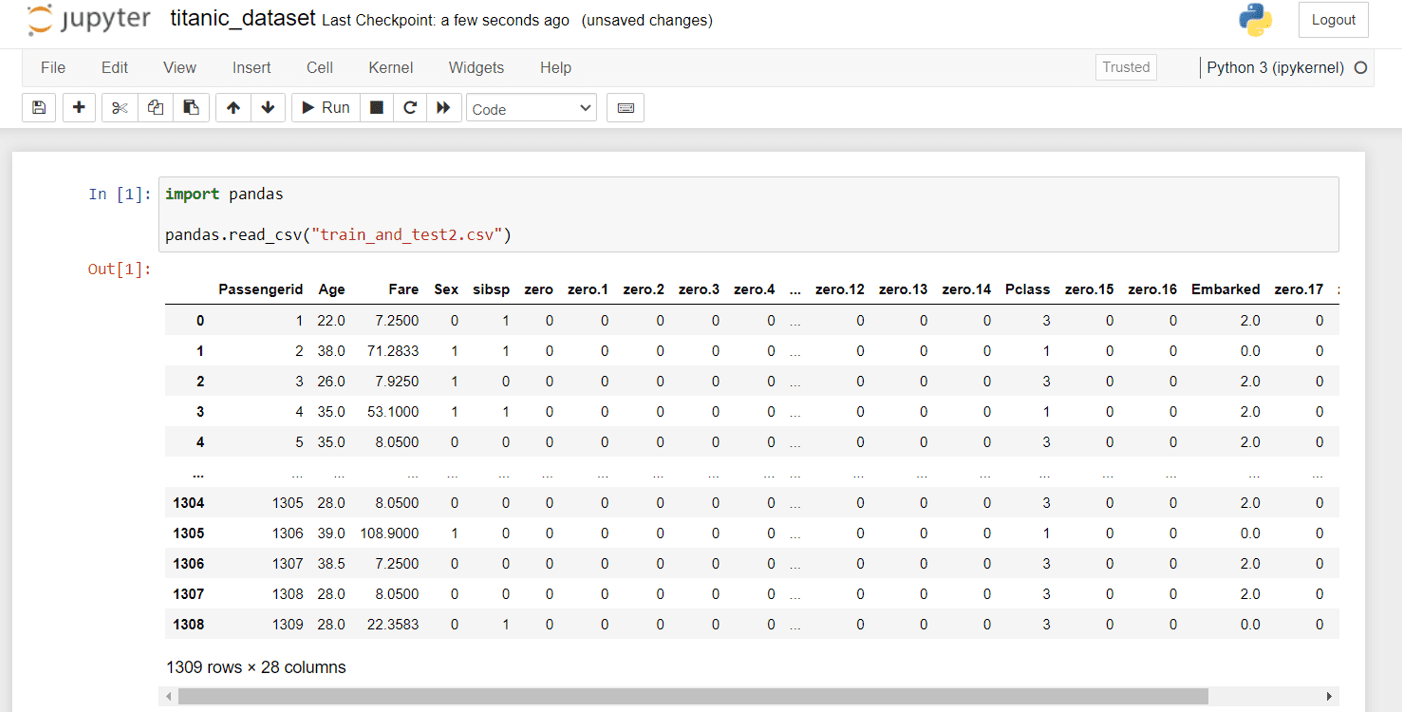
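If you are unsure of the exact path of an attached file, you can list everything under the input directory from within the notebook. This is a small sketch using the standard library; the helper name `list_input_files` is hypothetical, and the default root of `../input` simply mirrors the relative path used in this tutorial.

```python
import os
from typing import List


def list_input_files(root: str = "../input") -> List[str]:
    """Return the sorted paths of every file found under root.

    An empty list is returned if root does not exist.
    """
    found = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            found.append(os.path.join(dirpath, name))
    return sorted(found)


if __name__ == "__main__":
    for path in list_input_files():
        print(path)
```

Any path printed this way can be passed straight to a reader such as `pandas.read_csv`.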

We can read the data in the notebook by typing:

import pandas

pandas.read_csv("../input/titanic/train_and_test2.csv")

This loads the contents of the file into a pandas DataFrame.

Using Kaggle Datasets with the Kaggle CLI tool

Kaggle also has a public API with a CLI tool that we can use to download datasets, interact with competitions, and do a variety of other things. We’ll look at how to use the CLI tool to set up and download Kaggle datasets.

To begin, install the CLI tool with pip:

pip install kaggle

On Mac/Linux, you may need to install it for your user only:

pip install --user kaggle

Then, for authentication, you’ll need to generate an API token. Go to Kaggle’s homepage, click on your profile icon in the upper right corner, and then select Account.

Scroll down until you see Create New API Token, and click it.

This will download a kaggle.json file, which you will use to log in to the Kaggle CLI tool. For it to work, you must place it in the proper location. For Linux/Mac/Unix-based operating systems, this should be placed at ~/.kaggle/kaggle.json, and for Windows users, it should be placed at C:\Users\<Windows-username>\.kaggle\kaggle.json. If you put it in the wrong place and run Kaggle from the command line, you’ll get the following error:

OSError: Could not find kaggle.json. Make sure it’s located in … Or use the environment method
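Before running the CLI, you can check where your credentials would be picked up from. The following is a hedged sketch, not the CLI's own lookup logic. It assumes that the "environment method" in the error message means setting the `KAGGLE_USERNAME` and `KAGGLE_KEY` environment variables, and that kaggle.json holds `username` and `key` fields; neither detail is spelled out in this tutorial. The function name `find_kaggle_credentials` is made up for illustration.

```python
import json
import os
from pathlib import Path
from typing import Mapping, Optional


def find_kaggle_credentials(
    home: Optional[Path] = None,
    env: Optional[Mapping[str, str]] = None,
) -> str:
    """Describe where Kaggle credentials would be found, if anywhere."""
    home = Path.home() if home is None else Path(home)
    env = os.environ if env is None else env

    # Assumed "environment method": both variables must be set.
    if env.get("KAGGLE_USERNAME") and env.get("KAGGLE_KEY"):
        return "environment"

    # Otherwise look for the token file in the default location,
    # i.e. ~/.kaggle/kaggle.json (C:\Users\<name>\.kaggle on Windows).
    token = home / ".kaggle" / "kaggle.json"
    if not token.is_file():
        return f"missing: {token}"
    try:
        data = json.loads(token.read_text())
    except (OSError, ValueError):
        return f"unreadable: {token}"
    if not isinstance(data, dict) or not {"username", "key"} <= data.keys():
        return f"incomplete: {token}"
    return f"file: {token}"


if __name__ == "__main__":
    print(find_kaggle_credentials())
```

A result starting with `missing:` shows the exact path where the downloaded kaggle.json is expected.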

Let us now begin downloading those datasets!

To find datasets based on a search term, such as Titanic, we can use:

kaggle datasets list -s titanic

When we search for Titanic, we get:

$ kaggle datasets list -s titanic

| ref | title | size | lastUpdated | downloadCount | voteCount | usabilityRating |
| --- | --- | --- | --- | --- | --- | --- |
| datasets/heptapod/titanic | Titanic | 11KB | 2017-05-16 08:14:22 | 37681 | 739 | 0.7058824 |
| datasets/azeembootwala/titanic | Titanic | 12KB | 2017-06-05 12:14:37 | 13104 | 145 | 0.8235294 |
| datasets/brendan45774/test-file | Titanic dataset | 11KB | 2021-12-02 16:11:42 | 19348 | 251 | 1.0 |
| datasets/rahulsah06/titanic | Titanic | 34KB | 2019-09-16 14:43:23 | 3619 | 43 | 0.6764706 |
| datasets/prkukunoor/TitanicDataset | Titanic | 135KB | 2017-01-03 22:01:13 | 4719 | 24 | 0.5882353 |
| datasets/hesh97/titanicdataset-traincsv | Titanic-Dataset (train.csv) | 22KB | 2018-02-02 04:51:06 | 54111 | 377 | 0.4117647 |
| datasets/fossouodonald/titaniccsv | Titanic csv | 1KB | 2016-11-07 09:44:58 | 8615 | 50 | 0.5882353 |
| datasets/broaniki/titanic | titanic | 717KB | 2018-01-30 04:08:45 | 8004 | 128 | 0.1764706 |
| datasets/pavlofesenko/titanic-extended | Titanic extended dataset (Kaggle + Wikipedia) | 134KB | 2019-03-06 09:53:24 | 8779 | 130 | 0.9411765 |
| datasets/jamesleslie/titanic-cleaned-data | Titanic: cleaned data | 36KB | 2018-11-21 11:50:18 | 4846 | 53 | 0.7647059 |
| datasets/kittisaks/testtitanic | test titanic | 22KB | 2017-03-13 15:13:12 | 1658 | 32 | 0.64705884 |
| datasets/yasserh/titanic-dataset | Titanic Dataset | 22KB | 2021-12-24 14:53:06 | 1011 | 25 | 1.0 |
| datasets/abhinavralhan/titanic | titanic | 22KB | 2017-07-30 11:07:55 | 628 | 11 | 0.8235294 |
| datasets/cities/titanic123 | Titanic Dataset Analysis | 22KB | 2017-02-07 23:15:54 | 1585 | 29 | 0.5294118 |
| datasets/brendan45774/gender-submisson | Titanic: all ones csv file | 942B | 2021-02-12 19:18:32 | 459 | 34 | 0.9411765 |
| datasets/harunshimanto/titanic-solution-for-beginners-guide | Titanic Solution for Beginner's Guide | 34KB | 2018-03-12 17:47:06 | 1444 | 21 | 0.7058824 |
| datasets/ibrahimelsayed182/titanic-dataset | Titanic dataset | 6KB | 2022-01-27 07:41:54 | 334 | 8 | 1.0 |
| datasets/sureshbhusare/titanic-dataset-from-kaggle | Titanic DataSet from Kaggle | 33KB | 2017-10-12 04:49:39 | 2688 | 27 | 0.4117647 |
| datasets/shuofxz/titanic-machine-learning-from-disaster | Titanic: Machine Learning from Disaster | 33KB | 2017-10-15 10:05:34 | 3867 | 55 | 0.29411766 |
| datasets/vinicius150987/titanic3 | The Complete Titanic Dataset | 277KB | 2020-01-04 18:24:11 | 1459 | 23 | 0.64705884 |
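With this many results, it can help to rank them programmatically. The sketch below assumes you ran the search with the CLI's CSV output option (`kaggle datasets list -s titanic --csv`) and captured the text; it also assumes the CSV header uses the same column names as the listing above. Check `kaggle datasets list -h` for your installed version before relying on either. The function name `top_by_usability` and the `SAMPLE` text (three rows copied from the listing) are for illustration only.

```python
import csv
import io
from typing import List


def top_by_usability(csv_text: str, limit: int = 3) -> List[str]:
    """Return the refs of the datasets with the highest usabilityRating."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    # Sort from most to least usable; ties keep their original order.
    rows.sort(key=lambda row: float(row["usabilityRating"]), reverse=True)
    return [row["ref"] for row in rows[:limit]]


# Three rows from the listing above, written as CSV (assumed layout).
SAMPLE = """ref,title,size,lastUpdated,downloadCount,voteCount,usabilityRating
datasets/heptapod/titanic,Titanic,11KB,2017-05-16 08:14:22,37681,739,0.7058824
datasets/azeembootwala/titanic,Titanic,12KB,2017-06-05 12:14:37,13104,145,0.8235294
datasets/brendan45774/test-file,Titanic dataset,11KB,2021-12-02 16:11:42,19348,251,1.0
"""

if __name__ == "__main__":
    print(top_by_usability(SAMPLE, limit=2))
```

Sorting on `downloadCount` or `voteCount` instead only requires changing the key and converting with `int`.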

We can use the following command to download the first dataset in that list:

kaggle datasets download -d heptapod/titanic --unzip
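If you prefer to trigger the download from a Python script, one option is to call the same CLI through `subprocess`. This is a minimal sketch, not an official client: the helper names are hypothetical, and the `-p` option for choosing a destination folder is not covered in this tutorial, so confirm it with `kaggle datasets download -h` on your installation.

```python
import shutil
import subprocess
from typing import List


def build_download_command(
    dataset: str, dest: str = ".", unzip: bool = True
) -> List[str]:
    """Build the argument list for downloading one dataset with the CLI."""
    # -p (destination folder) is an assumption not covered in the tutorial.
    cmd = ["kaggle", "datasets", "download", "-d", dataset, "-p", dest]
    if unzip:
        cmd.append("--unzip")
    return cmd


def download_dataset(dataset: str, dest: str = ".") -> bool:
    """Run the CLI if it is installed; return True if it exits successfully."""
    if shutil.which("kaggle") is None:
        return False
    result = subprocess.run(build_download_command(dataset, dest))
    return result.returncode == 0


if __name__ == "__main__":
    # Only prints the command; call download_dataset(...) to actually run it.
    print(" ".join(build_download_command("heptapod/titanic", dest="data")))
```

Passing the arguments as a list avoids shell quoting problems with unusual dataset names or paths.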

As in the Kaggle notebook example, the downloaded file can then be read with pandas in a local Jupyter notebook.

Of course, some datasets are so large that you might not want to keep them on your own hard drive. Nonetheless, this is one of Kaggle’s free resources for machine learning projects!

Summary

You learned what Kaggle is, how to use it to get datasets, and even how to get some free GPU/TPU time within Kaggle Notebooks. You’ve also seen how the Kaggle API’s CLI tool can be used to download datasets for use in your local environment.

You specifically learned:

- What exactly is Kaggle?

- How to Use Kaggle Notebooks with GPU/TPU Accelerators

- How to use Kaggle datasets in Kaggle notebooks or download them using Kaggle’s command-line interface (CLI).
