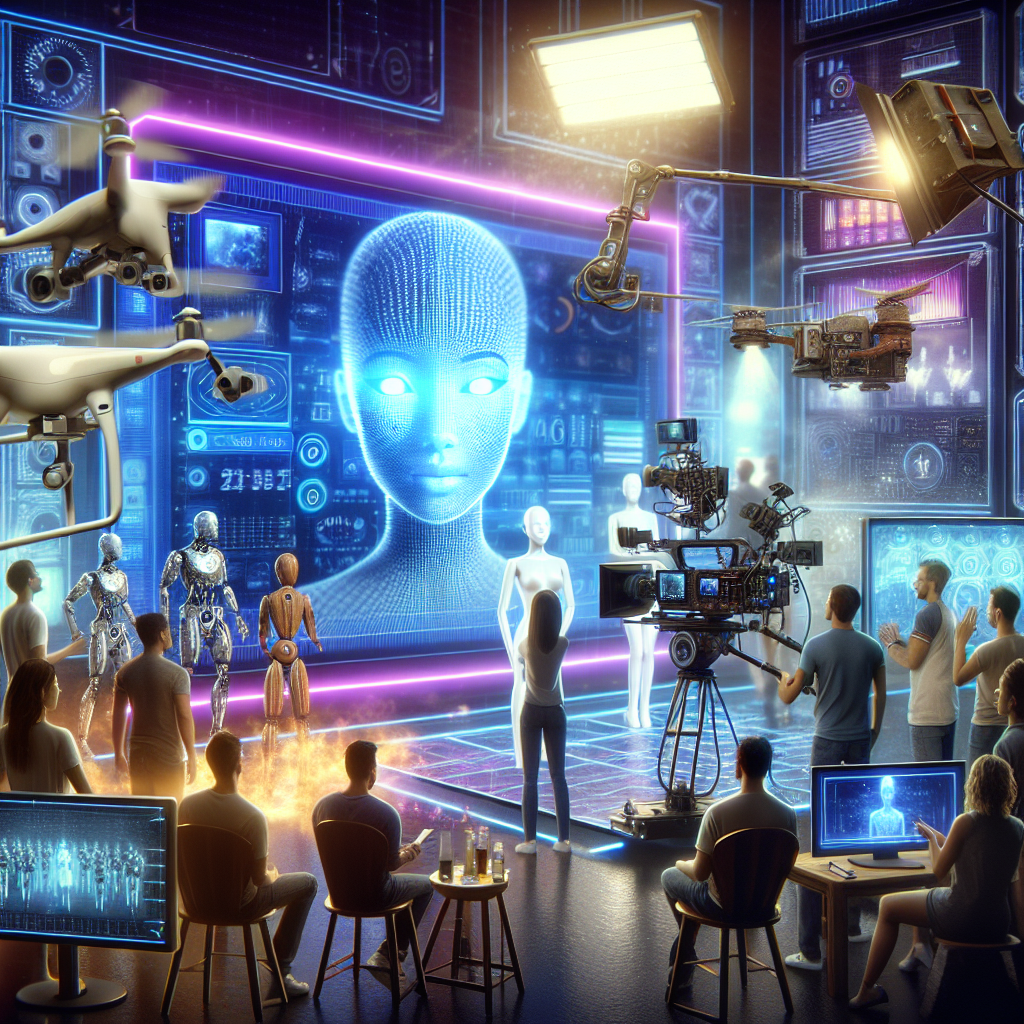

Last year’s Hollywood writers’ and actors’ strikes were about several issues, including residual payments and fair remuneration, but one issue stood out above the rest: the encroachment on people’s livelihoods by generative AI, the kind of artificial intelligence that can create text, images, and videos. It seemed inevitable that generative AI would be used in the media we consume, from movies and TV shows to vast tracts of unsavory content on the internet; Pandora’s box had already been opened. But because the development, use, and adoption of this technology would happen so quickly, the rallying cry at the time was that any protection won against businesses using AI to cut corners was a victory, even if it lasted only for a three-year contract.

That was no bluster. In the short months since the writers’ and performers’ guilds struck landmark agreements with the Alliance of Motion Picture and Television Producers (AMPTP), the average social media user has almost certainly come across AI-generated content, whether they were aware of it or not. Efforts to curb pornographic AI deepfakes of celebrities have reached the notoriously resistant and obtuse US Congress. The internet is now so full of conspiracy theories and false information, and the development of generative AI has so eroded what little remained of shared reality, that a Kate Middleton AI deepfake video seemed, to many, a reasonable conclusion. (Just to be clear, the video was real.) Producer Tyler Perry postponed an $800 million expansion of his Atlanta studios because he believed that “jobs are going to be lost” after Hollywood executives examined OpenAI’s upcoming text-to-video application Sora.

In short, a lot of people are afraid or, at best, cautious, and rightfully so. That makes it all the more important to pay attention to the smaller-scale conflicts over AI, and not to view them through a doomsday lens. Amid all the major news about deepfakes involving Taylor Swift and the possible end of jobs, generative AI has quietly made modest appearances in television and film, some of them potentially creative and others potentially threatening. The use of AI in and around legitimate artistic projects has increased noticeably in the last few weeks, raising questions about what is morally acceptable and testing the waters to see what audiences will notice or tolerate.

The latest season of True Detective caused a minor stir on social media over its AI-generated band posters. This followed some viewers’ displeasure with similar small AI-generated interstitials in the indie horror movie Late Night With the Devil. (“It’s so depressing up there that some kid with AI made the posters for a loser Metal festival for boomers,” Issa López, the showrunner of True Detective, stated on X. “It was discussed. Ad nauseam.”) Both of these cases have that eerie, glossy AI look, similar to the AI-generated credits of the 2023 Marvel television series Secret Invasion. Similarly, advertising posters for A24’s upcoming film Civil War show famous American landmarks, like the Marina Towers in Chicago or a bombed-out Sphere in Las Vegas, destroyed by a fictional internal conflict, but they also bear hallmark AI flaws (cars with three doors, etc.).

Cinephiles have taken issue with the use of artificial intelligence (AI enhancement, as opposed to generative AI) to enhance (or, depending on your point of view, oversaturate and destroy) preexisting films, such as James Cameron’s True Lies, for new DVD and Blu-ray releases. A trailer for a fictitious James Bond film, with Henry Cavill and Margot Robbie in the lead roles and clearly and openly marked as AI, has amassed over 2.6 million views on YouTube as of this writing.

The most worrisome development of all was what the website Futurism revealed about the recently released Netflix true crime documentary What Jennifer Did: what looked like AI-generated or AI-altered “photos” of Jennifer Pan, the woman convicted over the 2010 murder-for-hire plot against her parents. The images, which first appear around the 28-minute mark of the movie, are used to illustrate the description by Pan’s high school friend Nam Nguyen of her “bubbly, happy, confident, and very genuine” personality. In them, Pan is laughing, making the peace sign, and grinning broadly, with strangely spaced fingers, a mouth twisted into a smile, and a peculiar, overly bright sheen. In a Toronto Star interview, executive producer Jeremy Grimaldi neither confirmed nor denied the use of AI. “Any film-maker will use different tools, like Photoshop, in films,” he stated. “Those are actual images of Jennifer. She is in the foreground. For source protection, the backdrop has been made anonymous.” Netflix did not respond to a request for comment.

Grimaldi does not specify which tools were used to “anonymize” the background, or why Pan’s teeth and fingers appear distorted. Even if generative AI was not used, it is still a concerning revelation, because it suggests that the film presents a visual archive of Pan that does not actually exist in that form. If generative AI was used, that would be an outright archival lie. The Archival Producers Alliance, a group of documentary producers, recently released a set of best practices that, while supporting the use of AI for minor image restoration or touch-ups, strongly discourage creating new content, modifying original sources, or doing anything that would “change their meaning in ways that could mislead the audience.”

It is this last point, deceiving the audience, that in my opinion anchors the emerging consensus on which uses of AI are acceptable or unacceptable in TV and movies. In the absence of an explicit response, it’s unclear which tools were used to alter the “photos” in What Jennifer Did. This reminds me of the controversy surrounding the use of AI to generate some of Anthony Bourdain’s voice in the 2021 documentary Roadrunner, a dispute over disclosure, or the lack thereof, that overshadowed a nuanced examination of a complex figure. Although the use of AI in that movie was astounding, it revived evidence rather than produced it; the problem lay in the way we learned about it, which was after the fact.

Once more, we find ourselves parsing small details whose creation seems crucial to consider, because it is. An overtly AI-generated trailer for a fictitious James Bond film may be odd and a waste of time, but it is at least transparent about what it is. Using AI-generated posters where an artist could have been hired seems like a cop-out, an inch given away, and a depressing sign of what we are expected to accept. Using AI to create a false historical record would be plainly unethical at best and genuinely deceptive at worst. Taken separately, these are all small tests of the line that we’re all attempting to locate in real time. Taken together, they make the need to locate it feel even more pressing.