HQL, or Hive Query Language, is a simple but powerful SQL-like querying language that allows users to perform data analytics on large datasets. Because of its SQL-like syntax, HQL has gained popularity among data engineers and is simple to learn for those new to big data and Hive.

In this article, we will run several HQL commands on a “big data” dataset containing information on customers, their purchases, InvoiceID, country of origin, and many other things. These parameters will assist us in better understanding our customers and making more effective and sound business decisions.

For execution, we will use the Beeline command-line interface, which runs queries through HiveServer2.

Next, we enter the following command to connect to HiveServer2.

!connect jdbc:hive2://m02.itversity.com:10000/;auth=noSasl

It requires authentication, so we enter our username and password for this session, as well as the location or path to our database. The commands for this are listed below (in red).

set hive.metastore.warehouse.dir = /user/username/warehouse;

We can now query our database because we are connected to HiveServer2. We begin by creating our database “demo01” and then enter the command to use it.

Use demo01;

Using the following command, we will now list all of the tables in the demo01 database.

show tables;

As we can see above, the demo01 database already contains two tables: “emp” and “t1.” So, for our customer dataset, we’ll make a new table called “customers.”

CREATE TABLE IF NOT EXISTS customers (InvoiceNo VARCHAR(255),Stock_Code VARCHAR(255),Description VARCHAR(255),Quantity INT,UnitPrice DECIMAL(6,2),CustomerID INT,Country VARCHAR(255)) ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' ;

When we run the “show tables” command, we get the following results.

In the demo01 database, we can see that a table called customers has been created. Using the following command, we can also view the table’s schema.

desc customers;

Using this command, we now upload our new.csv file containing customer records to our hdfs storage.

hdfs dfs -put new.csv /user/itv001775/warehouse/demo01.db/customers

This data must now be loaded into the customer’s table that we created earlier. To accomplish this, we execute the following command.

load data inpath '/user/itv001775/warehouse/demo01.db/customers/new.csv' into table customers;

This concludes the section in which we uploaded the data to hdfs and loaded it into the customer’s table in the demo01 database.

Now we’ll do some data eyeballing, which means we’ll look at the data and see what insights we can glean from it. Because the dataset contains over 580,000 records, we will use this command to look at the first 5 records for convenience.

select * from customers limit 5;

As seen above, there are seven columns: invoiceno, stock code, description, quantity, unitprice, customerid, and country. Each column adds value and insights to the data analysis we will conduct next.

Data Analysis through HQL

CASE 1: We want to insert the records of a customer from Germany with customerid-18443 who purchased a Gaming PC, invoice number 610221, stock code 3427AB, quantity 2 at a unit price of 9000 into the customer’s table.

Query

insert into customers values (‘610221’,’3427AB’,’Gaming PC’,2,9000,18443,’Germany’);

We can now query the database to see if the record was successfully inserted.

select * from customers limit 5;

As can be seen, a record has been inserted.

CASE 2: We want to see the total of each customer’s purchases, as well as the invoiceno, in descending order.

Query: (for convenience we limit our output to the first 10 records)

select customerid, sum(unitprice) as total_purchase from customers group by customerid order by total_purchase desc limit 10;

In the query above, we are grouping our records based on the same customer ids and ordering the results by the total purchase made by each customer.

Aside from customers who do not have a customerid, we can determine our top ten customers based on the amount of their purchase. This can be extremely beneficial in identifying and targeting potential customers who will be profitable for businesses.

CASE 3: We’d like to know the average price of bags sold to our customers.

Query:

select avg(unitprice) as average_bagPrice from customers where description like '%BAG%';

It’s worth noting that in the preceding query, we used the “like” logical operator to retrieve the text from the description field. The “% ” symbol indicates that anything can come before or after the word “bag” in the text.

We can see that the average price across the entire range of products sold under the bag tag is 2.35. (dollars, euros, or any other currency). The same can be said for other articles that can assist businesses in determining price ranges for their products in order to increase sales output.

CASE 4: We want to count the number of products sold as well as the average price of those products for the top ten countries in descending order by count.

Query:

select count(*) as number_of_products, sum(unitprice) as totalsum_of_price, country from customers group by country order by totalsum_of_price desc limit 10;

In this case, count(*) means counting all of the records separately for each country and sorting the output by the total sum of the prices of goods sold in that country.

We can infer from this query which countries businesses should target the most because the total revenue generated from these countries is the highest.

CASE 5: show the customerid and the total number of products ordered for each customer with a quantity greater than 10, ordered descendingly by quantity for the top 20 customers.

Query:

select customerid, sum(quantity) as number_of_products from customers group by customerid having number_of_products>10 order by number_of_products desc limit 20;

We are grouping each customer’s records by their id and determining the number of products they purchased in descending order of that statistic. We also use the condition that only records with more than ten products are chosen.

When we want to specify a condition, we always use the “having” clause with the group by.

This allows us to identify our top customers based on the number of products they ordered. Customers who order the most generate the most profit for the company and should thus be scouted and pursued the most, and this analysis assists us in finding them efficiently.

Bonus

Hive has an amazing feature that allows us to sort our data using the “Sort by” clause. It nearly serves the same purpose as the “order by” clause in that it arranges the data in ascending or descending order. The main difference between these commands is how they work.

We know that in Hive, the HQL queries we write are converted into MapReduce jobs to abstract the complexity and make it more user-friendly.

As a result, when we run a query like:

Select customerid, unitprice from customers sort by customerid;

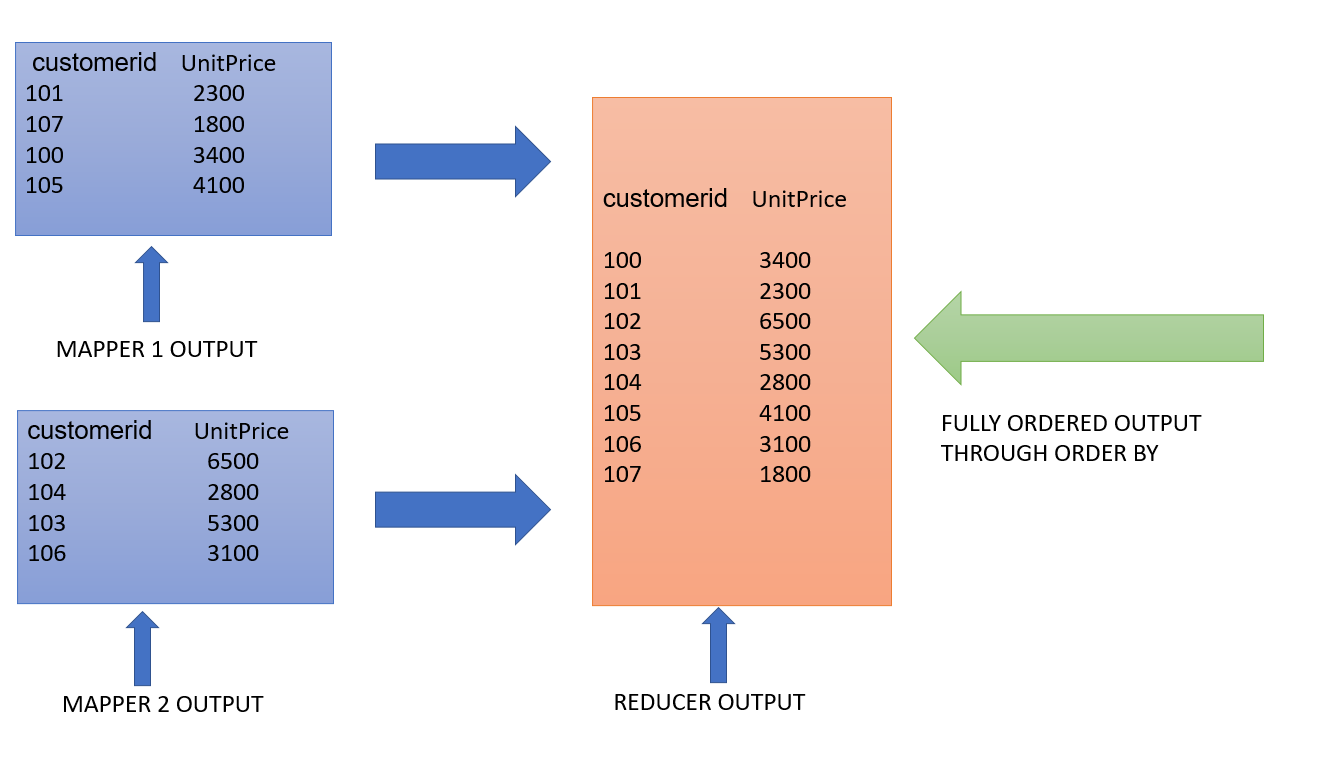

For the MapReduce job, multiple mappers and reducers are deployed. The use of multiple reducers, which is the key difference here, should be highlighted.

Multiple mappers send their data to their respective reducers, where it is sorted by the column specified in the query, in this case, customerid. The final output contains appended data from all reducers, resulting in partial data sorting.

In order by, multiple mappers and only one reducer are used. When a single reducer is used, the data passed on from the mappers is completely sorted.

Select customerid, unitprice from customers order by customerid;

The data clearly shows the difference in Reducer output. As a result, “order by” guarantees complete data order, whereas “sort by” returns partially ordered results.

Conclusion

We learned how to run HQL queries and extract insights from our customer dataset for data analytics in this article. These insights are valuable business intelligence that can help drive business decisions.

Source link