In this article, we dive into how PyTorch’s Autograd engine performs automatic differentiation.

PyTorch is one of the foremost Python deep learning libraries out there. It’s the go-to choice for deep learning research, and with each passing day, more companies and research labs are adopting it.

In this series of tutorials, we will introduce you to PyTorch and show how to make the best use of the library as well as the ecosystem of tools built around it. We’ll first cover the basic building blocks, and then move on to how you can quickly prototype custom architectures. We will conclude with a couple of posts on how to scale your code, and how to debug it if things go awry.

Automatic Differentiation

A lot of tutorial series on PyTorch begin with a rudimentary discussion of the basic structures. However, I’d like to start by discussing automatic differentiation instead.

Automatic differentiation is a building block of not only PyTorch, but every DL library out there. In my opinion, PyTorch’s automatic differentiation engine, called Autograd, is a brilliant tool for understanding how automatic differentiation works. This will help you understand not only PyTorch better, but also other DL libraries.

Modern neural network architectures can have millions of learnable parameters. From a computational point of view, training a neural network consists of two phases:

- A forward pass to compute the value of the loss function.

- A backward pass to compute the gradients of the learnable parameters.

The forward pass is pretty straightforward. The output of one layer is the input to the next, and so forth.

The backward pass is a bit more complicated, since it requires us to use the chain rule to compute the gradients of the loss function with respect to the weights.

A toy example

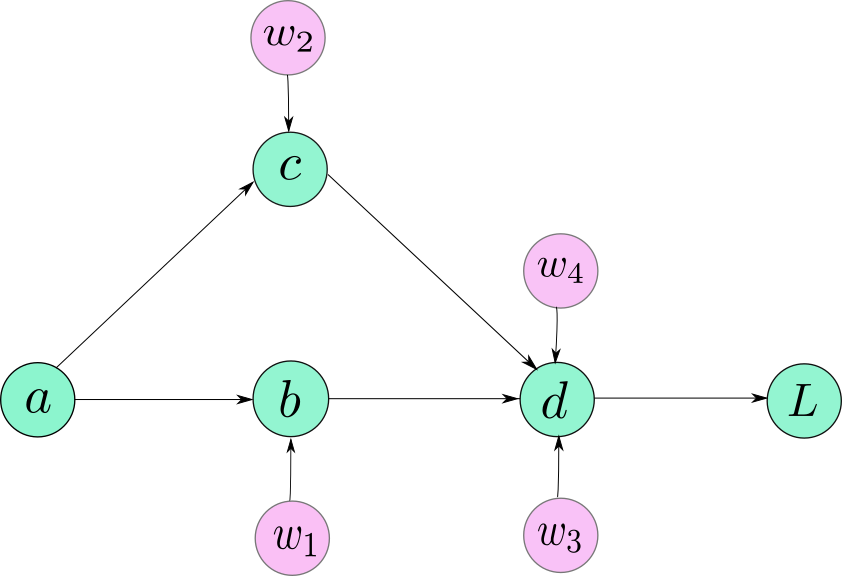

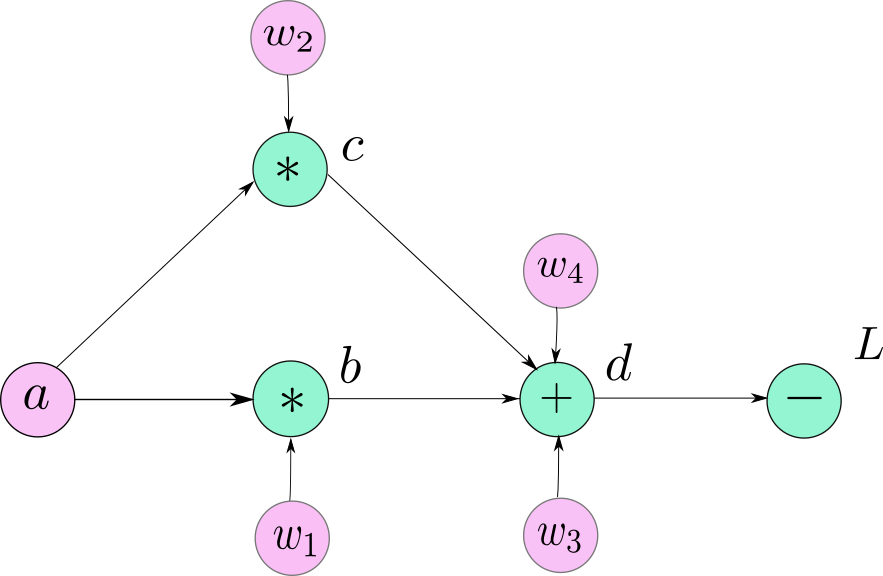

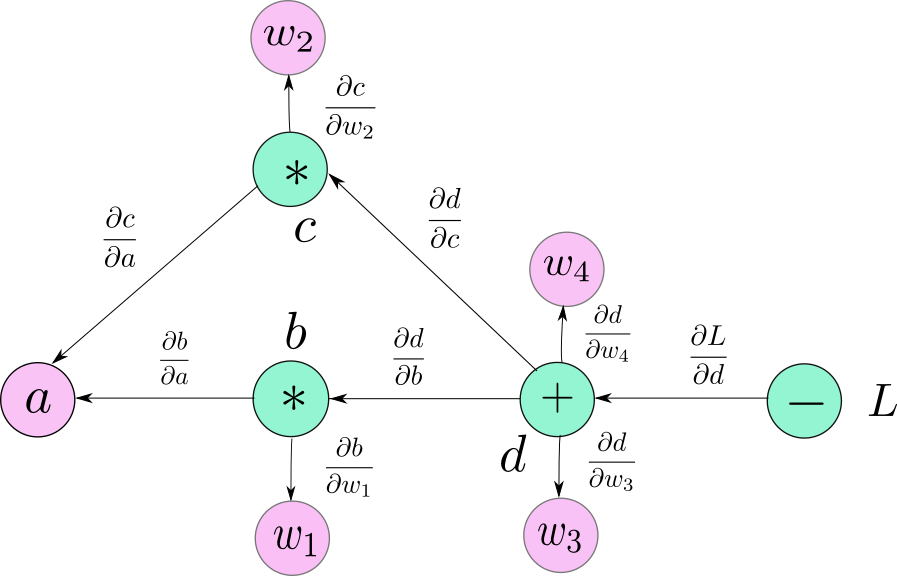

Let us take a very simple neural network consisting of just 5 neurons. Our neural network looks like the following.

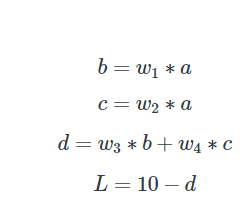

The following equations describe our neural network.

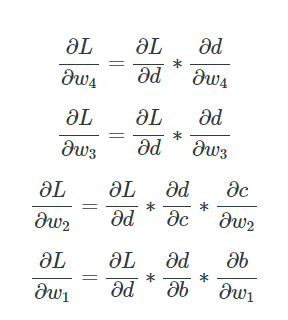

Let us compute the gradients for each of the learnable parameters w.

All these gradients have been computed by applying the chain rule. Note that all the individual gradients on the right-hand side of the equations above can be computed directly, since the numerators of the gradients are explicit functions of the denominators.
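As a quick sanity check of the chain rule, here is a minimal sketch that works through a scalar version of the toy network by hand and compares the result with what PyTorch computes. It assumes the network equations are b = w1*a, c = w2*a, d = w3*b + w4*c and L = 10 - d, which is how the network is written in the code used later in this article; the numeric values are arbitrary.

```python
import torch

# Scalar version of the toy network; the numeric values are arbitrary assumptions.
a = torch.tensor(2.0)
w1 = torch.tensor(0.5, requires_grad=True)
w2 = torch.tensor(-1.0, requires_grad=True)
w3 = torch.tensor(3.0, requires_grad=True)
w4 = torch.tensor(0.25, requires_grad=True)

b = w1 * a
c = w2 * a
d = w3 * b + w4 * c
L = 10 - d

L.backward()

# Chain rule by hand, using dL/dd = -1, dd/db = w3, dd/dc = w4, db/dw1 = dc/dw2 = a.
manual = {
    "w1": -1 * w3.item() * a.item(),  # dL/dd * dd/db * db/dw1
    "w2": -1 * w4.item() * a.item(),  # dL/dd * dd/dc * dc/dw2
    "w3": -1 * b.item(),              # dL/dd * dd/dw3
    "w4": -1 * c.item(),              # dL/dd * dd/dw4
}

for name, tensor in [("w1", w1), ("w2", w2), ("w3", w3), ("w4", w4)]:
    print(name, "autograd:", tensor.grad.item(), "by hand:", manual[name])
```

Each hand-computed value is just a product of local derivatives along the path from L back to the parameter, which is the pattern the rest of the article automates.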

Computation Graphs

We could manually compute the gradients of our network because it was very simple. Imagine, though, a network with 152 layers, or one with multiple branches.

When we design software to implement neural networks, we want a way to compute the gradients seamlessly, regardless of the architecture, so that the programmer doesn’t have to recompute gradients by hand whenever the network changes.

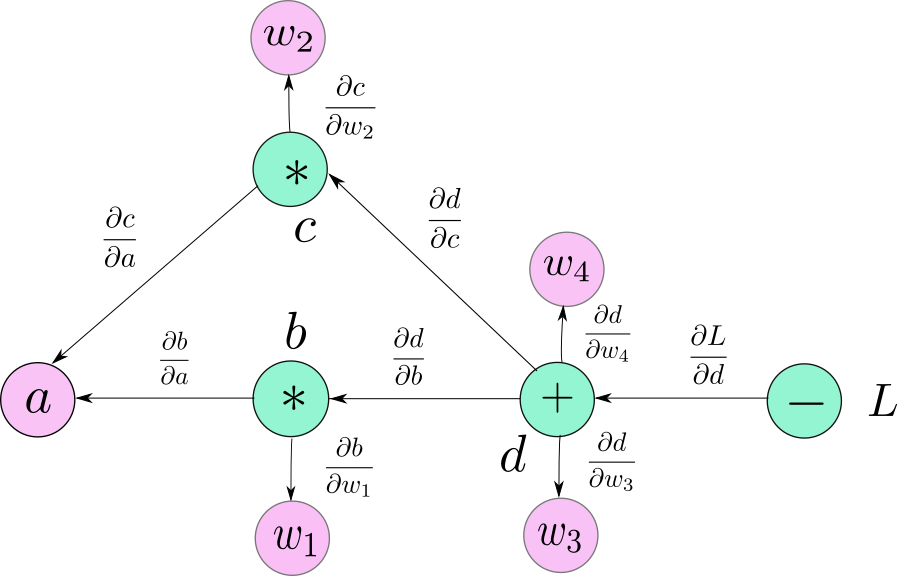

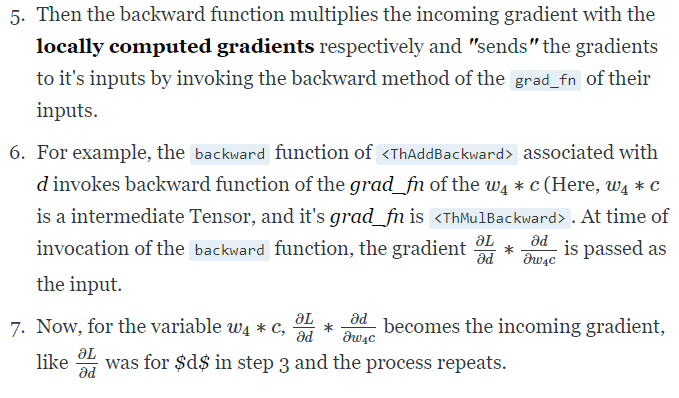

We formalise this idea in the form of a data structure called a computation graph. A computation graph looks very similar to the diagram of the network in the image above. However, the nodes in a computation graph are operators. These are the usual mathematical operators, with one exception: the case where we need to represent the creation of a user-defined variable.

Notice that we have also denoted the leaf variables a, w1, w2, w3, w4 in the graph for the sake of clarity. However, it should be noted that they are not part of the computation graph. What they represent in our graph is the special case for user-defined variables, which we just covered as an exception.

The variables b, c and d are created as a result of mathematical operations, whereas the variables a, w1, w2, w3 and w4 are initialised by the user. Since they are not created by any mathematical operator, the nodes corresponding to their creation are represented by their names. This is true for all the leaf nodes in the graph.

Computing the gradients

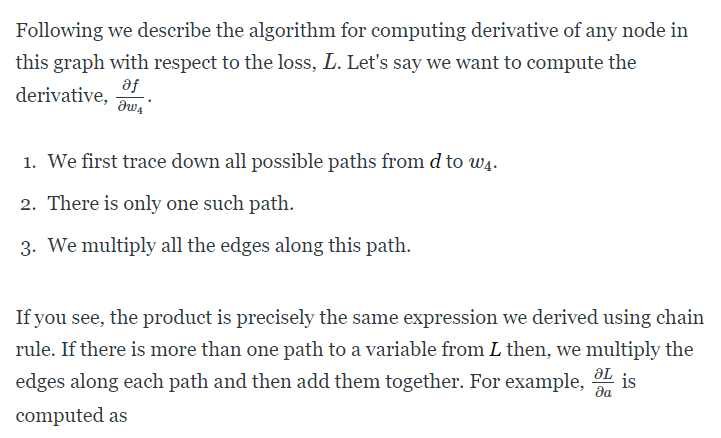

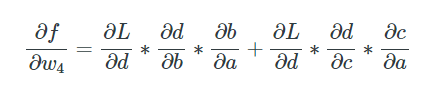

Now, we are ready to describe how we will compute gradients using a computation graph.

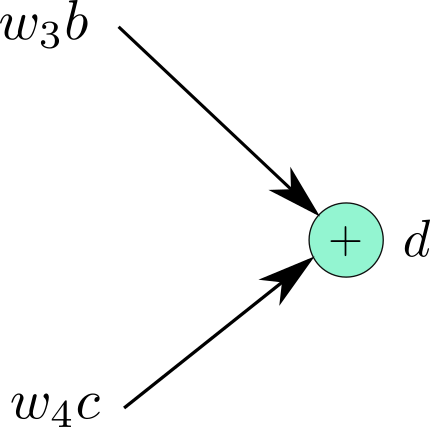

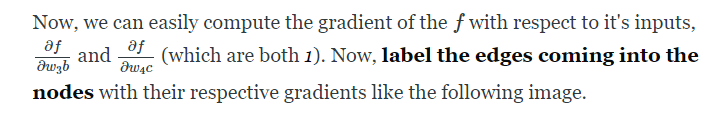

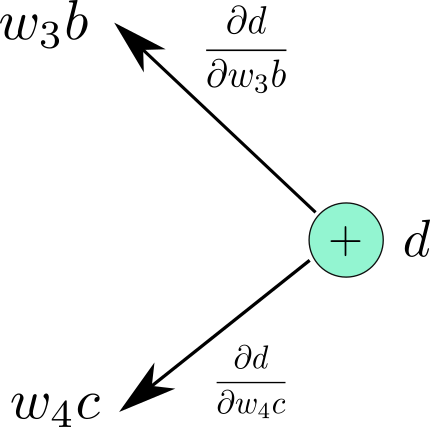

Each node of the computation graph, with the exception of leaf nodes, can be considered a function which takes some inputs and produces an output. Consider the node of the graph which produces the variable d from w3*b and w4*c.

Therefore we can write d = f(w3*b, w4*c), where f is the addition performed at that node.

We do it for the entire graph. The graph looks like this.

PyTorch Autograd

Now that we understand what a computation graph is, let’s get back to PyTorch and see how the above is implemented there.

Tensor

Tensor is the data structure that forms the fundamental building block of PyTorch. Tensors are pretty much like NumPy arrays, except that, unlike NumPy arrays, tensors are designed to take advantage of the parallel computation capabilities of a GPU. A lot of Tensor syntax is similar to that of NumPy arrays.

In [1]: import torch

In [2]: tsr = torch.Tensor(3,5)

In [3]: tsr

Out[3]:

tensor([[ 0.0000e+00, 0.0000e+00, 8.4452e-29, -1.0842e-19, 1.2413e-35],
        [ 1.4013e-45, 1.2416e-35, 1.4013e-45, 2.3331e-35, 1.4013e-45],
        [ 1.0108e-36, 1.4013e-45, 8.3641e-37, 1.4013e-45, 1.0040e-36]])

The values shown are arbitrary, since torch.Tensor(3,5) allocates memory without initialising it.

On its own, a Tensor is just like a NumPy ndarray: a data structure that lets you do fast linear algebra operations. If you want PyTorch to create a graph corresponding to these operations, you will have to set the requires_grad attribute of the Tensor to True.

The API can be a bit confusing here. There are multiple ways to initialise tensors in PyTorch. While some let you set requires_grad in the constructor itself, others require you to set it manually after the Tensor has been created.

>> t1 = torch.randn((3,3), requires_grad = True)

>> t2 = torch.FloatTensor(3,3) # No way to specify requires_grad while initiating

>> t2.requires_grad = True

requires_grad is contagious. This means that when a Tensor is created by operating on other Tensors, the requires_grad of the resultant Tensor is set to True if at least one of the Tensors used to create it has its requires_grad set to True.
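The contagious behaviour is easy to check directly. The following is a small sketch with made-up variable names (x, w, y, z) that builds one result only from tensors that do not require gradients and another from a mix.

```python
import torch

x = torch.randn(3, 3)                      # requires_grad defaults to False
w = torch.randn(3, 3, requires_grad=True)  # explicitly tracked

y = x * 2  # built only from tensors that do not require grad
z = x * w  # one operand requires grad, so the result does too

print("y.requires_grad:", y.requires_grad)
print("z.requires_grad:", z.requires_grad)
```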

Each Tensor has an attribute called grad_fn, which refers to the mathematical operator that created the variable. If requires_grad is set to False, grad_fn is None. It is also None for leaf Tensors created directly by the user, such as a in the example below.

import torch

a = torch.randn((3,3), requires_grad = True)

w1 = torch.randn((3,3), requires_grad = True)

w2 = torch.randn((3,3), requires_grad = True)

w3 = torch.randn((3,3), requires_grad = True)

w4 = torch.randn((3,3), requires_grad = True)

b = w1*a

c = w2*a

d = w3*b + w4*c

L = 10 - d

print("The grad fn for a is", a.grad_fn)

print("The grad fn for d is", d.grad_fn)

If you run the code above, you get the following output.

The grad fn for a is None

The grad fn for d is <AddBackward0 object at 0x1033afe48>

You can use the is_leaf attribute to determine whether a variable is a leaf Tensor or not.
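Here is a short sketch of how is_leaf and grad_fn relate, reusing the names a, w1 and b from the example above. The exact class name of the grad_fn object may vary between PyTorch versions, so the code only prints it.

```python
import torch

a = torch.randn((3, 3), requires_grad=True)
w1 = torch.randn((3, 3), requires_grad=True)
b = w1 * a

# User-created tensors are leaves and have no grad_fn.
print("a:", a.is_leaf, a.grad_fn)

# Tensors produced by an operation are not leaves and remember that operation.
print("b:", b.is_leaf, type(b.grad_fn).__name__)
```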

Function

All mathematical operations in PyTorch are implemented by the torch.autograd.Function class. This class has two important member functions we need to look at.

The first is its forward function, which simply computes the output from its inputs.

The backward function takes the incoming gradient coming from the part of the network in front of it. As you can see, the gradient to be backpropagated from a function f is the gradient that is backpropagated to f from the layers in front of it, multiplied by the local gradient of the output of f with respect to its inputs. This is exactly what the backward function does.

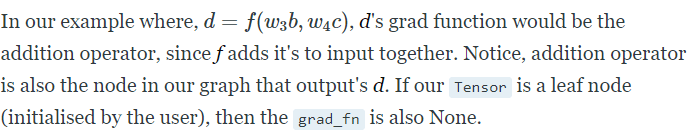

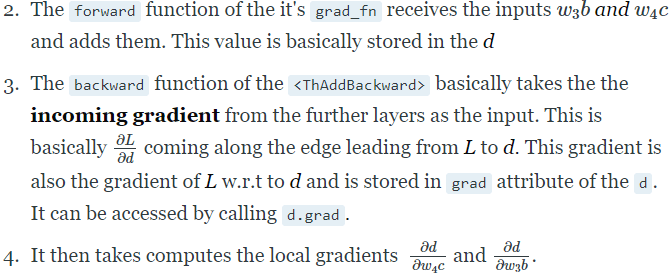
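To make the forward and backward pair concrete, here is a minimal sketch of a custom multiplication operator written with torch.autograd.Function. The class name Multiply and the input values are made up for illustration; PyTorch's built-in multiplication is implemented internally and is not written this way.

```python
import torch

class Multiply(torch.autograd.Function):
    """Illustrative element-wise product with a hand-written backward."""

    @staticmethod
    def forward(ctx, x, y):
        # Cache the inputs; they are the local gradients needed later.
        ctx.save_for_backward(x, y)
        return x * y

    @staticmethod
    def backward(ctx, grad_output):
        x, y = ctx.saved_tensors
        # Incoming gradient times local gradient, one result per input.
        return grad_output * y, grad_output * x

x = torch.tensor(3.0, requires_grad=True)
y = torch.tensor(4.0, requires_grad=True)

out = Multiply.apply(x, y)
out.backward()

print(x.grad, y.grad)  # d(out)/dx = y and d(out)/dy = x
```

Note that backward returns one gradient per input of forward, in the same order.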

Let’s understand this again with our example of d = w3*b + w4*c.

d is our Tensor here. Its grad_fn is <AddBackward0> (older PyTorch releases print it as <ThAddBackward>). This is the addition operation, since the function that creates d adds its inputs.

Algorithmically, here’s how backpropagation happens with a computation graph. (Not the actual implementation, only representative)

def backward(self, incoming_gradients):
    self.Tensor.grad = incoming_gradients

    for inp in self.inputs:
        if inp.grad_fn is not None:
            # Chain rule: scale the incoming gradient by the local gradient
            new_incoming_gradients = incoming_gradients * local_grad(self.Tensor, inp)
            inp.grad_fn.backward(new_incoming_gradients)
        else:
            # Leaf node: nothing further to backtrack through
            pass

Here, self.Tensor is basically the Tensor created by Autograd.Function, which was d in our example.

Incoming gradients and local gradients have been described above.
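The representative algorithm above can be turned into a small runnable sketch for scalars. The Node class and its fields are hypothetical and have nothing to do with PyTorch's real implementation. Unlike the pseudocode, this version accumulates gradients with += so that a, which feeds two branches, receives contributions from both.

```python
class Node:
    """A scalar value in a tiny computation graph (illustrative only)."""

    def __init__(self, value, parents=(), local_grads=()):
        self.value = value
        self.parents = parents          # input nodes; empty for leaves
        self.local_grads = local_grads  # d(self)/d(parent), one per parent
        self.grad = 0.0

    def __add__(self, other):
        return Node(self.value + other.value, (self, other), (1.0, 1.0))

    def __mul__(self, other):
        return Node(self.value * other.value, (self, other),
                    (other.value, self.value))

    def backward(self, incoming_gradient=1.0):
        # Accumulate, then pass incoming * local gradient to every input.
        self.grad += incoming_gradient
        for parent, local in zip(self.parents, self.local_grads):
            parent.backward(incoming_gradient * local)

# Same structure as the toy network, with arbitrary values.
a, w1, w2, w3, w4 = Node(2.0), Node(0.5), Node(-1.0), Node(3.0), Node(0.25)
b = w1 * a
c = w2 * a
d = w3 * b + w4 * c

d.backward()
print(w1.grad, w2.grad, w3.grad, w4.grad, a.grad)
```

The recursion stops at leaves because they have no parents, which mirrors the grad_fn is None check in the pseudocode. This naive recursion revisits shared nodes once per path, so it is only suitable for tiny graphs.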

In order to compute derivatives in our neural network, we generally call backward on the Tensor representing our loss. Then, we backtrack through the graph starting from the node representing the grad_fn of our loss.

As described above, the backward function is called recursively throughout the graph as we backtrack. Once we reach a leaf node, whose grad_fn is None, we stop backtracking along that path.
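You can peek at the path that backtracking follows by walking the graph from the loss. The sketch below relies on the next_functions attribute that grad_fn objects expose in current PyTorch releases; the helper node_names is made up for this example, and the exact node names printed may differ between versions.

```python
import torch

a = torch.randn((3, 3), requires_grad=True)
w1 = torch.randn((3, 3), requires_grad=True)
w2 = torch.randn((3, 3), requires_grad=True)
w3 = torch.randn((3, 3), requires_grad=True)
w4 = torch.randn((3, 3), requires_grad=True)

b = w1 * a
c = w2 * a
d = w3 * b + w4 * c
L = (10 - d).sum()

def node_names(fn, depth=0, out=None):
    """Collect the names of graph nodes reachable from fn, indented by depth."""
    out = [] if out is None else out
    if fn is not None:  # inputs such as plain constants have no node
        out.append("  " * depth + type(fn).__name__)
        for next_fn, _ in fn.next_functions:
            node_names(next_fn, depth + 1, out)
    return out

print("\n".join(node_names(L.grad_fn)))
```

In this view, each leaf that requires gradients shows up as a terminal node that accumulates into its grad attribute, which is where each path of the traversal ends.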

One thing to note here is that PyTorch raises an error if you call backward() without arguments on a non-scalar Tensor. This means you can only call backward() directly on a scalar-valued Tensor. In our example, a is a 3x3 Tensor, so L is not a scalar, and calling backward on L raises an error.

import torch

a = torch.randn((3,3), requires_grad = True)

w1 = torch.randn((3,3), requires_grad = True)

w2 = torch.randn((3,3), requires_grad = True)

w3 = torch.randn((3,3), requires_grad = True)

w4 = torch.randn((3,3), requires_grad = True)

b = w1*a

c = w2*a

d = w3*b + w4*c

L = (10 - d)

L.backward()

Running the above snippet results in the following error.

RuntimeError: grad can be implicitly created only for scalar outputs

This is because a gradient is, by definition, the derivative of a scalar-valued function. Differentiating a vector with respect to another vector gives a matrix of partial derivatives called a Jacobian, the discussion of which is beyond the scope of this article.

There are two ways to overcome this.

The first is to make a small change in the above code and set L to be the sum of all the errors.

import torch

a = torch.randn((3,3), requires_grad = True)

w1 = torch.randn((3,3), requires_grad = True)

w2 = torch.randn((3,3), requires_grad = True)

w3 = torch.randn((3,3), requires_grad = True)

w4 = torch.randn((3,3), requires_grad = True)

b = w1*a

c = w2*a

d = w3*b + w4*c

# Replace L = (10 - d) by

L = (10 -d).sum()

L.backward()

Once that’s done, you can access the gradients by reading the grad attribute of each Tensor.

The second way applies if, for some reason, you absolutely have to call backward on a vector-valued function: you can pass backward a torch.ones Tensor with the same shape as the Tensor you are calling it on.

# Replace L.backward() with

L.backward(torch.ones(L.shape))

Notice how backward takes incoming gradients as its input. Doing the above makes backward treat the incoming gradient as a Tensor of ones of the same size as L, so it is able to backpropagate.
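The two approaches give the same gradients, which the following sketch checks on a cut-down version of the example (only w1 and a, with a not tracked, to keep it short). It uses torch.ones_like as a shorthand for torch.ones(L.shape), and resets w1.grad to None between the two runs so the results do not accumulate.

```python
import torch

torch.manual_seed(0)  # arbitrary seed, only for repeatability
a = torch.randn((3, 3))
w1 = torch.randn((3, 3), requires_grad=True)

# Way 2: call backward on the non-scalar tensor with a tensor of ones.
L = 10 - w1 * a
L.backward(torch.ones_like(L))
grad_from_ones = w1.grad.clone()

# Way 1: reduce to a scalar first, then call backward with no arguments.
w1.grad = None
(10 - w1 * a).sum().backward()
grad_from_sum = w1.grad.clone()

print(torch.allclose(grad_from_ones, grad_from_sum))
```

Both are equal to -a here, since each element of L depends on the matching element of w1 with local gradient -a.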

In this way, we can have gradients for every learnable Tensor, and we can update them using the optimisation algorithm of our choice.

w1 = w1 - learning_rate * w1.grad

And so on.
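Written literally, the update above would create a new non-leaf Tensor named w1. A common way to apply it in practice is to update the leaf in place inside torch.no_grad() and then clear the stored gradient. The sketch below assumes plain gradient descent with an arbitrary learning_rate and a cut-down loss.

```python
import torch

a = torch.randn((3, 3))
w1 = torch.randn((3, 3), requires_grad=True)
learning_rate = 0.1  # arbitrary value for illustration

loss = (10 - w1 * a).sum()
loss.backward()

before = w1.detach().clone()

# Update in place without recording the update itself in the graph.
with torch.no_grad():
    w1 -= learning_rate * w1.grad

# Clear the gradient so the next backward pass starts from zero.
w1.grad.zero_()

print(w1.is_leaf, w1.requires_grad)
```

After the update, w1 is still a leaf Tensor that requires gradients, so it can take part in the next forward pass.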

How are PyTorch’s graphs different from TensorFlow graphs?

PyTorch creates something called a Dynamic Computation Graph, which means that the graph is generated on the fly.

Until the forward function of an operation is called, there exists no node for the resulting Tensor (its grad_fn) in the graph.

a = torch.randn((3,3), requires_grad = True) #No graph yet, as a is a leaf

w1 = torch.randn((3,3), requires_grad = True) #Same logic as above

b = w1*a #Graph with node `MulBackward0` is created.

The graph is created as a result of the forward functions of many Tensors being invoked. Only then are the buffers for the non-leaf nodes allocated for the graph and the intermediate values (used for computing gradients later). When you call backward, these buffers (for non-leaf variables) are essentially freed as the gradients are computed, and the graph is destroyed (in the sense that you can’t backpropagate through it, since the buffers holding the values needed to compute the gradients are gone).

The next time you call forward on the same set of tensors, the leaf node buffers from the previous run will be shared, while the non-leaf node buffers will be created again.

If you call backward more than once on a graph with non-leaf nodes, you’ll be met with the following error.

RuntimeError: Trying to backward through the graph a second time, but the buffers have already been freed. Specify retain_graph=True when calling backward the first time.

This is because the non-leaf buffers get destroyed the first time backward() is called, and hence there’s no path to navigate to the leaves when backward is invoked the second time. You can undo this buffer-destroying behaviour by passing the retain_graph=True argument to the backward function.

loss.backward(retain_graph = True)

If you do the above, you will be able to backpropagate again through the same graph, and the gradients will be accumulated, i.e. the next time you backpropagate, the gradients will be added to those already stored from the previous backward pass.
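The accumulation is easy to observe. In this sketch (a cut-down loss with made-up names first and second), the first call keeps the graph alive, and the second call adds the same gradient on top of the stored one.

```python
import torch

a = torch.randn((3, 3), requires_grad=True)
w1 = torch.randn((3, 3), requires_grad=True)

loss = (w1 * a).sum()

loss.backward(retain_graph=True)  # keep the buffers for another pass
first = w1.grad.clone()

loss.backward()                   # second pass; buffers are freed afterwards
second = w1.grad.clone()

# The second pass was added to the first, so the stored gradient doubled.
print(torch.allclose(second, 2 * first))
```

This is also why training loops usually reset gradients between iterations.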

This is in contrast to the static computation graphs used by TensorFlow (in its 1.x graph mode), where the graph is declared before running the program. The graph is then “run” by feeding values to the predefined graph.

The dynamic graph paradigm allows you to make changes to your network architecture during runtime, as a graph is created only when a piece of code is run.

This means a graph may be redefined during the lifetime of a program, since you don’t have to define it beforehand.

This, however, is not possible with static graphs where graphs are created before running the program, and merely executed later.

Dynamic graphs also make debugging way easier since it’s easier to locate the source of your error.
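As a small illustration of what the dynamic paradigm allows, the sketch below uses ordinary Python control flow to decide how many operations are recorded on each call. The function name forward and the branching rule are made up for this example.

```python
import torch

def forward(x, w):
    # Plain Python control flow picks how many multiplications get recorded.
    steps = 1 if x.sum() > 0 else 3
    out = x
    for _ in range(steps):
        out = out * w
    return out.sum()

w = torch.tensor(2.0, requires_grad=True)

# Positive input: one multiplication, so the loss is 2*w.
forward(torch.ones(2), w).backward()
grad_one_step = w.grad.item()      # 2.0

# Negative input: three multiplications, so the loss is -2*w**3.
w.grad = None  # clear the stored gradient before the second run
forward(-torch.ones(2), w).backward()
grad_three_steps = w.grad.item()   # -6 * w**2 = -24.0

print(grad_one_step, grad_three_steps)
```

The same function produces two differently shaped graphs depending on its input, and backward simply follows whichever one was built.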

Some Tricks of the Trade

requires_grad

This is an attribute of the Tensor class. By default, it’s False. It comes in handy when you have to freeze some layers and stop their parameters from being updated during training. You can simply set requires_grad to False, and these Tensors won’t participate in the computation graph.

Thus, no gradient will be propagated to them, or to the layers which depend upon them for gradient flow. When set to True, requires_grad is contagious, meaning that if even one operand of an operation has requires_grad set to True, so will the result.
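Here is a minimal sketch of freezing a layer. The two-layer model and its sizes are made up for illustration; it uses torch.nn.Linear, whose parameters have requires_grad set to True by default.

```python
import torch
from torch import nn

# A tiny made-up model: the first layer will be frozen, the last one trained.
model = nn.Sequential(nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 2))

for param in model[0].parameters():
    param.requires_grad = False

out = model(torch.randn(5, 4)).sum()
out.backward()

frozen = [p.grad is None for p in model[0].parameters()]
trained = [p.grad is not None for p in model[2].parameters()]
print("frozen layer has no grads:", all(frozen))
print("last layer has grads:", all(trained))
```

Because the frozen parameters never receive a gradient, an optimiser step leaves them unchanged.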

torch.no_grad()

When we are computing gradients, we need to cache the input values and intermediate features, as they may be required to compute the gradient later.

While performing inference, we don’t compute gradients, and thus don’t need to store these values. In fact, no graph needs to be created during inference, as it would only consume memory needlessly.

PyTorch offers a context manager, called torch.no_grad for this purpose.

with torch.no_grad():
    ...  # inference code goes here

No graph is defined for operations executed under this context manager.
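A quick way to confirm this is to run the same operation inside and outside the context manager and compare the results. The names w, x, y and z below are made up for the example.

```python
import torch

w = torch.randn((3, 3), requires_grad=True)
x = torch.randn((3, 3))

with torch.no_grad():
    y = w * x  # computed without recording a graph

z = w * x      # same operation, recorded as usual

print("inside no_grad: ", y.requires_grad, y.grad_fn)
print("outside no_grad:", z.requires_grad, type(z.grad_fn).__name__)
```

The values of y and z are identical; only the bookkeeping differs.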

Conclusion

Understanding how Autograd and computation graphs work can make life with PyTorch a whole lot easier. With our foundations rock solid, the next posts will detail how to create custom complex architectures, how to create custom data pipelines, and more interesting stuff.

